File Structure

SCOPE/

├── dataset/

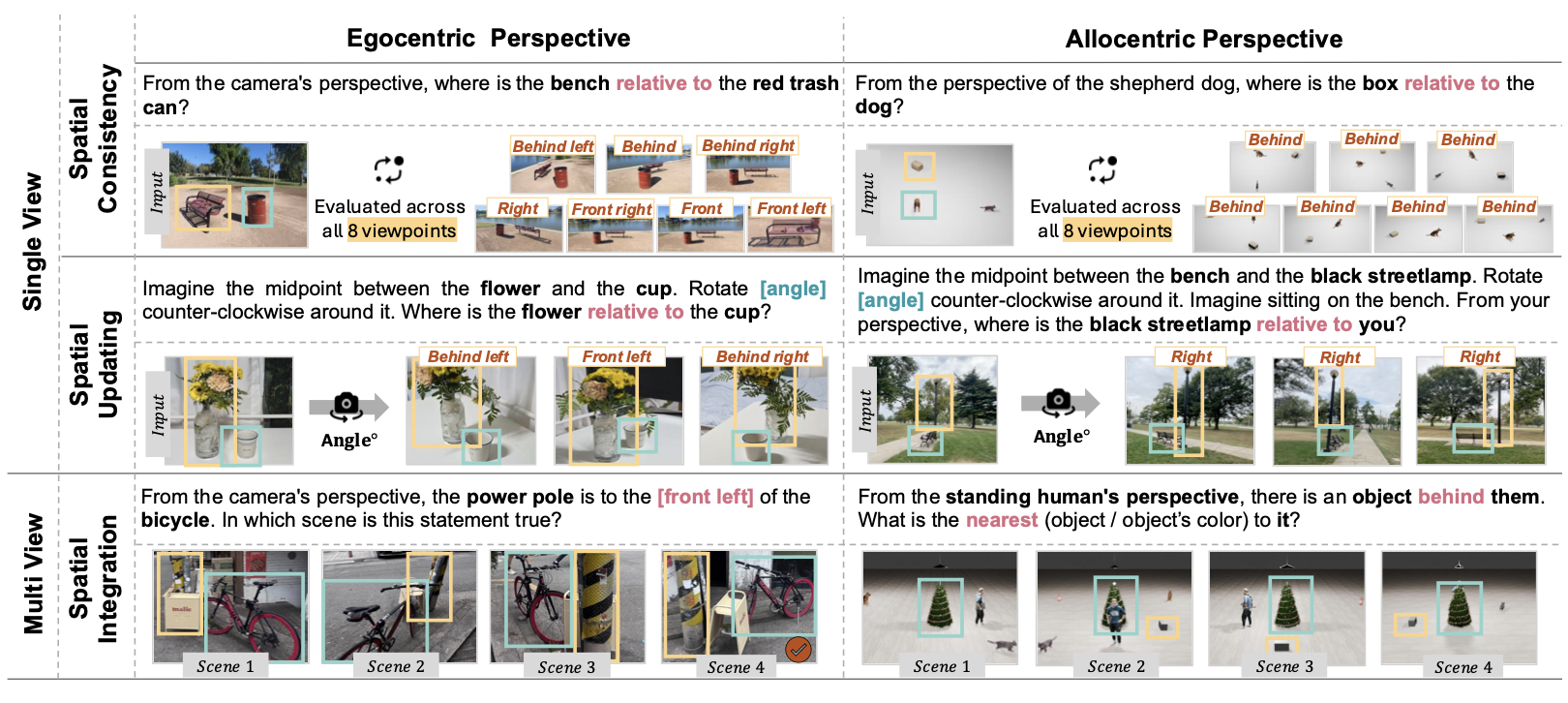

│ ├── task1.jsonl (Ego Spatial Consistency)

│ ├── task2.jsonl (Allo Spatial Consistency)

│ ├── task3.jsonl (Ego Spatial Updating)

│ ├── task4.jsonl (Ego Spatial Integration)

│ ├── task5.jsonl (Allo Spatial Updating)

│ ├── task6.jsonl (Allo Spatial Integration)

│ └── image/

│ ├── {scene_id}/

│ │ ├── frame_0deg.png

│ │ ├── frame_45deg.png

│ │ ├── ...

│ │ └── frame_315deg.png

│ └── occlusion/

│ └── {scene_id}/

│ └── frame_{deg}deg.png

├── evaluate/

│ ├── src/

│ └── scripts/

└── inference/

├── multi_integration/

└── viewpoint_invariance_and_spatial_updating/

Sample Entry

{

"image": "dl3dv_2/frame_0deg.png",

"camera_yaw_deg": 0,

"source_folder": "dl3dv_2",

"object_subject": "bench",

"object_reference": "trash can",

"question": "From the camera's perspective,

where is bench relative to trash can?",

"options": {

"A": "front",

"B": "behind",

"C": "left",

"D": "right"

},

"answer": "C",

"answer_text": "left",

"question_type": "mcq_4"

}